Stopping the Spike: Anthropic’s Pre-Paid Power Plan

📰 The Scoop: Anthropic has officially moved to a front-funded energy model, pledging to cover grid infrastructure costs and market price increases before they reach local residents. According to its February 11, 2026, policy update, the company will pay utility providers directly for the grid upgrades and power premiums its data centers require, with the goal of insulating neighbors from AI-driven rate hikes.

🧠 What This Means: Traditionally, when a massive power user like a data center moves into a town, the cost of new wires and substations is shared by every local resident through a slow increase in monthly rates. Anthropic is flipping the script: it is paying 100% of those interconnection costs upfront. Instead of the community subsidizing the AI company's growth, the company funds the high-capacity infrastructure its data centers need, and that infrastructure becomes part of the local grid.

🔎 Why It Matters To You:

Preventative Pricing: The goal is for your utility bill to stay flat. By paying the utility company directly, Anthropic aims to leave no uncovered cost for the utility to pass on to you.

Grid Reliability: Because Anthropic is funding modern, high-capacity equipment that they share with the town, your local power grid might actually become more stable than it was before they arrived.

Smart Throttling: Anthropic has committed to "curtailment" (slowing down its AI training during heatwaves or peak hours) to keep market prices from hitting emergency levels for everyone else.

The "Fair Play" Standard: This sets a massive precedent. In a 2026 landscape where the federal government is pressuring tech giants to "pay their own way," this proactive model makes it much harder for competitors to offload their costs onto the public.

🔮 Looking Ahead: This "pre-paid" infrastructure model could become a standard requirement for new data center permits across the country. As the AI arms race continues, the cost of these community protections will likely show up in AI subscription fees rather than in your monthly power bill.

Silicon Prairie: Meta’s $10 Billion Bet on Indiana

📰 The Scoop: Meta has officially broken ground on a massive, 1,500-acre data center campus in Lebanon, Indiana. Announced on February 11, 2026, this $10 billion project is one of the company’s largest infrastructure investments ever. While the internet is busy making jokes about "AI being grown in cornfields," the facility is a high-tech powerhouse designed to deliver a staggering 1 gigawatt (1GW) of capacity to fuel Meta’s next generation of "Personal Superintelligence."

🧠 What This Means: Meta is doubling down on a rural-first strategy for AI. By moving away from overcrowded tech hubs like Silicon Valley and Northern Virginia, they are tapping into the vast space and supportive utility partnerships of the Midwest. This isn't just a warehouse for servers; it’s a purpose-built factory for training the massive AI models that will eventually power your Instagram, Facebook, and WhatsApp experiences.

🔎 Why It Matters To You:

Faster Social AI: With 1GW of dedicated power, Meta’s AI features, from real-time video translation to advanced digital assistants, will likely become significantly faster and more responsive.

Economic Influx: The project is expected to create 4,000 construction jobs and 300 permanent roles, potentially transforming Lebanon from a quiet farming town into a critical hub for the global digital economy.

Community Energy Protections: In a move that mirrors the broader push for corporate responsibility in AI, Meta is donating $1 million annually for the next 20 years ($20 million in total) to help Boone County families pay their energy bills.

Infrastructure Upgrades: Meta is footing a $120 million bill for local road, water, and utility improvements, meaning the town gets modern infrastructure that the company, not the taxpayer, is paying for.

🔮 Looking Ahead: Despite the economic benefits, tech analysts warn that the sheer scale of the 1,500-acre site could still strain local resources. As more tech giants look to the "Silicon Prairie," expect more rural communities to demand that these gigawatt-scale investments come with ironclad guarantees for local energy and water protection.

Google’s "Digital Shield" for Global Democracy

📰 The Scoop: Google has launched a comprehensive full-stack security initiative aimed at protecting democratic institutions from the growing threat of AI-driven cyber attacks and misinformation. Unveiled at the February 2026 Munich Security Conference, the plan centers on a new whitepaper, "Staying Ahead of the Shadows," which outlines how the tech giant is deploying AI-powered tools like CodeMender and the Secure AI Framework (SAIF) to bolster digital resilience. The announcement has exploded on social media, with thousands of users praising Google for stepping up as a digital bodyguard for the 2026 election cycle.

🧠 What This Means: Google is moving beyond just flagging bad content; it is fortifying the actual plumbing of democracy. By partnering with defense organizations like NATO and the U.S. Department of War, Google is using its AI to hunt for vulnerabilities in election infrastructure and to provide "sovereign cloud" solutions that keep government data secure from foreign interference. It’s essentially an upgrade from basic antivirus software to a high-tech, AI-monitored security perimeter.

🔎 Why It Matters To You:

Preemptive Protection: Tools like SynthID are being scaled to watermark AI-generated content, making it harder for deepfakes of local candidates to pass as real on your feed.

Secure Infrastructure: Voting data and local government services can be moved to air-gapped cloud systems, which are isolated from the public internet and designed to keep running even during massive state-sponsored cyberattacks.

High-Quality Info: Gemini is being tuned to prioritize authoritative public service information, ensuring that when you ask "where do I vote?", you get a verified answer instead of AI-generated junk.

The Skeptic’s Corner: While the tools are powerful, critics argue this gives a single corporation massive power to define what is a threat versus what is protected speech.

🔮 Looking Ahead: As the 2026 midterms approach, Google’s "Digital Shield" will likely become the blueprint for how tech giants work with governments. The big question remains: can AI-driven defenses outpace the AI-driven attacks being developed by bad actors?

Your Ads Are About to Get Scary Smart (Again)

📰 The Scoop: In a commerce blog update, Google outlined its vision for digital advertising in 2026, promising hyper-personalized ads powered by advanced AI that can predict what you want before you know it yourself. Instead of just giving you a list of links to click, the search engine will find the product and let you buy it instantly without leaving the page. The plan comes as privacy concerns about AI-powered advertising reach new heights.

🧠 What This Means: Remember how creepy it felt when ads started following you around the internet? That was just the beginning. Now AI will analyze everything from your browsing patterns to your typing speed to serve ads that feel almost psychic in their accuracy. Think of it like this: rather than you doing the legwork of visiting five different websites to find a pair of shoes, the AI does the shopping for you. It looks at your past habits to guess your style, finds a Direct Offer (a special coupon made just for you), and puts a Buy Now button right in your chat. It’s moving away from being a search engine and toward being an action engine.

🔎 Why It Matters To You:

Ads will become far more relevant to your actual needs, potentially saving you time when shopping for products you want.

Your online privacy will face new challenges as AI gets better at building detailed profiles of your behavior and preferences.

Shopping experiences could become seamless and personalized, but also potentially manipulative in unprecedented ways.

You might find yourself buying things you didn't plan to, as AI gets better at identifying and triggering your purchasing decisions.

🔮 Looking Ahead: Marketing experts like Sarah Stemen note that this shift makes the search query almost obsolete as a command. By the end of 2026, most of your shopping might happen entirely inside a conversation with an AI. As AI agents begin shopping on behalf of humans, the next battleground won't be for your clicks but for the trust of your digital assistant. Expect a massive push for new privacy regulations as ads move from your screen into your "thinking process."

ChatGPT Gets "Lockdown Mode" to Block Risky Requests

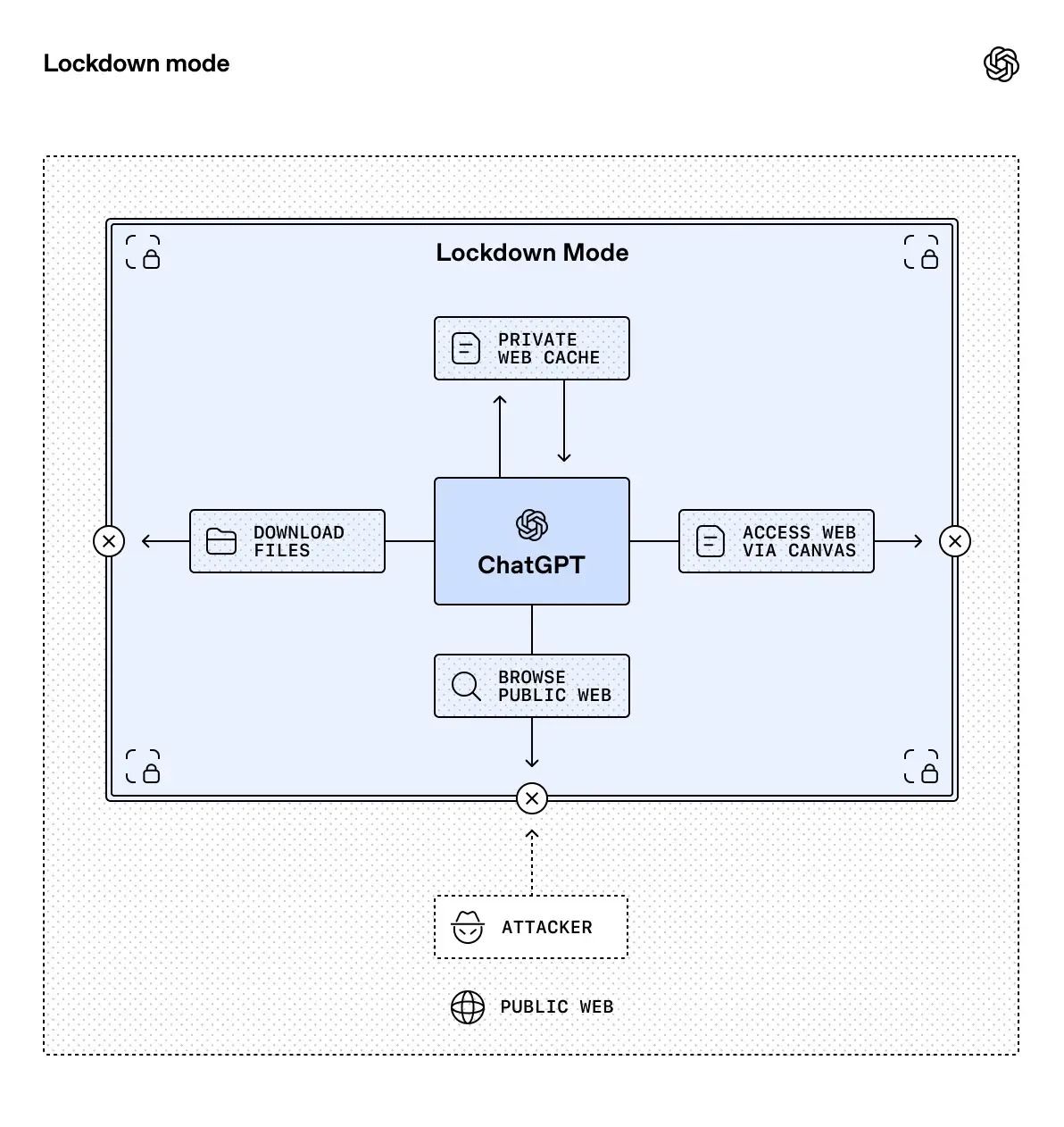

📰 The Scoop: OpenAI has introduced two new security tools for ChatGPT: "Lockdown Mode" and "Elevated Risk" labels. These features are designed to protect users from prompt injection, a type of attack where hackers try to trick the AI into leaking private information or following malicious instructions. While Lockdown Mode acts like a high-security vault for sensitive data, the new labels serve as clear warning signs when users enable features that could expose their information.

🧠 What This Means: Lockdown Mode is a defense-first setting that limits what ChatGPT can do behind the scenes. For example, it restricts the AI’s ability to browse the live web, limiting it to safer, cached versions of websites instead. The "Elevated Risk" labels are standardized warnings that make sure you know exactly when you're using a tool that might make your data more vulnerable to outside interference.

🔎 Why It Matters To You:

Security-conscious users, like business leaders or teachers, can now opt into a much stricter security environment to protect sensitive or proprietary work.

You’ll get a clearer heads-up via labels whenever you use advanced features (like connecting ChatGPT to your apps) that carry higher security stakes.

It shifts the responsibility toward the user, providing you with the tools to decide how much risk you're willing to take in exchange for more powerful AI features.

This signals a move away from simple filters toward deeper, technical security controls that actually change how the software functions.

🔮 Looking Ahead: Initially, these features are rolling out to business, education, and healthcare users, with consumer access expected in the coming months. As AI becomes more agentic, meaning it can do things like book flights or edit your files, expect these types of manual safety switches to become a standard part of how we interact with technology.

Want to learn more about AI? Visit aitechexplained.com

Forward to a friend who will find this useful.

This newsletter is generated with the assistance of AI under human oversight for accuracy and tone.